Do columns in the table (and their types) match a predefined schema?

Source: R/col_schema_match.R

The col_schema_match() validation function, the expect_col_schema_match() expectation function, and the test_col_schema_match() test function all work in conjunction with a col_schema object (generated through the col_schema() function) to determine whether the expected schema matches that of the target table. The validation function can be used directly on a data table or with an agent object (technically, a ptblank_agent object), whereas the expectation and test functions can only be used with a data table.

The validation step or expectation operates over a single test unit: whether the schema matches that of the table (within the constraints enforced by the complete, in_order, and is_exact options). If the target table is a tbl_dbi or a tbl_spark object, we can choose to validate a column schema based on R column types (e.g., "numeric", "character", etc.), SQL column types (e.g., "double", "varchar", etc.), or Spark SQL types (e.g., "DoubleType", "StringType", etc.). That option is defined in the col_schema() function (it is the .db_col_types argument).
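Here is a minimal sketch of two ways to obtain a col_schema object for a local data frame. The first writes the types out by hand. The second derives them from the table itself through col_schema()'s .tbl argument. The table name df_example is illustrative only. The .db_col_types = "sql" choice applies to tbl_dbi or tbl_spark tables, so this sketch does not exercise it.

```r
library(pointblank)

# Illustrative local table (hypothetical, not part of the original examples)
df_example <- data.frame(
  a = 1:3,
  b = c("x", "y", "z"),
  stringsAsFactors = FALSE
)

# Schema written by hand, using R column types
schema_manual <- col_schema(a = "integer", b = "character")

# Schema derived from the table itself through the `.tbl` argument
schema_derived <- col_schema(.tbl = df_example)

# Both schema objects are expected to describe the table exactly
manual_ok  <- test_col_schema_match(df_example, schema = schema_manual)
derived_ok <- test_col_schema_match(df_example, schema = schema_derived)
```

Deriving the schema from a known-good table and then reusing it against later versions of that table is one way to avoid typing out long schemas.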

There are options to make schema checking less stringent (by default, this validation operates with the highest level of strictness). With the complete option set to FALSE, we can supply a col_schema object that includes only some of the table's columns. Setting in_order to FALSE removes the requirement for the columns defined in the schema object to be in the same order as in the target table. Finally, setting the is_exact option to FALSE means that not every class/type of a column has to be provided in the schema. A column's type can even be NULL, which skips the type check for that column.
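The sketch below shows how each relaxation option changes the outcome of a check. It assumes an illustrative table, tbl_wide, with three columns. The last column is a date-time column, whose classes are "POSIXct" and "POSIXt". The names tbl_wide and partial_schema are hypothetical.

```r
library(pointblank)

# Hypothetical table: an integer, a character, and a date-time column
tbl_wide <- dplyr::tibble(
  a = 1:3,
  b = c("x", "y", "z"),
  t = as.POSIXct(c("2024-01-01", "2024-01-02", "2024-01-03"), tz = "UTC")
)

# Only two of the three columns, listed in reverse order
partial_schema <- col_schema(b = "character", a = "integer")

# Default strictness: `t` is missing from the schema and the order differs
strict_result <- test_col_schema_match(tbl_wide, schema = partial_schema)

# Relaxing `complete` and `in_order` lets the partial schema pass
relaxed_result <- test_col_schema_match(
  tbl_wide,
  schema = partial_schema,
  complete = FALSE,
  in_order = FALSE
)

# With is_exact = FALSE, one of the column's classes is enough
inexact_result <- test_col_schema_match(
  tbl_wide,
  schema = col_schema(a = "integer", b = "character", t = "POSIXct"),
  is_exact = FALSE
)

# A NULL type skips the type check for that column
null_type_result <- test_col_schema_match(
  tbl_wide,
  schema = col_schema(a = "integer", b = "character", t = NULL),
  is_exact = FALSE
)
```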

Usage

col_schema_match(
  x,
  schema,
  complete = TRUE,
  in_order = TRUE,
  is_exact = TRUE,
  actions = NULL,
  step_id = NULL,
  label = NULL,
  brief = NULL,
  active = TRUE
)

expect_col_schema_match(
  object,
  schema,
  complete = TRUE,
  in_order = TRUE,
  is_exact = TRUE,
  threshold = 1
)

test_col_schema_match(
  object,
  schema,
  complete = TRUE,
  in_order = TRUE,
  is_exact = TRUE,
  threshold = 1
)

Arguments

- x

A pointblank agent or a data table

obj:<ptblank_agent>|obj:<tbl_*> // required

A data frame, tibble (tbl_df or tbl_dbi), Spark DataFrame (tbl_spark), or an agent object of class ptblank_agent that is commonly created with create_agent().

- schema

The table schema

obj:<col_schema> // required

A table schema of type col_schema, which can be generated using the col_schema() function.

- complete

Requirement for columns specified to exist

scalar<logical> // default: TRUE

A requirement to account for all table columns in the provided schema. By default, this is TRUE, so all column names in the target table must be present in the schema object. This restriction can be relaxed by using FALSE, in which case we can provide a subset of table columns in the schema.

- in_order

Requirement for columns in a specific order

scalar<logical> // default: TRUE

A stringent requirement for enforcing the order of columns in the provided schema. By default, this is TRUE and the order of columns in both the schema and the target table must match. Setting it to FALSE removes this strict order requirement.

- is_exact

Requirement for column types to be exactly specified

scalar<logical> // default: TRUE

Determines whether the check for column types should be exact or even performed at all. For example, columns in R data frames may have multiple classes (e.g., a date-time column can have both the "POSIXct" and the "POSIXt" classes). If using is_exact = FALSE, the column type in the user-defined schema for a date-time value can be set as either "POSIXct" or "POSIXt" and pass validation (with this column, at least). This can be taken a step further: using NULL for a column type in the user-defined schema will skip the validation check of that column's type. By default, is_exact is set to TRUE.

- actions

Thresholds and actions for different states

obj:<action_levels> // default: NULL (optional)

A list containing threshold levels so that the validation step can react accordingly when exceeding the set levels for different states. This is to be created with the action_levels() helper function.

- step_id

Manual setting of the step ID value

scalar<character> // default: NULL (optional)

One or more optional identifiers for the single or multiple validation steps generated from calling a validation function. The use of step IDs serves to distinguish validation steps from each other and provides an opportunity for supplying a more meaningful label compared to the step index. By default this is NULL, and pointblank will automatically generate the step ID value (based on the step index) in this case. One or more values can be provided, and the exact number of ID values should (1) match the number of validation steps that the validation function call will produce (influenced by the number of columns provided), (2) be an ID string not used in any previous validation step, and (3) be a vector with unique values.

- label

Optional label for the validation step

vector<character> // default: NULL (optional)

Optional label for the validation step. This label appears in the agent report and, for the best appearance, it should be kept quite short. See the Labels section for more information.

- brief

Brief description for the validation step

scalar<character> // default: NULL (optional)

A brief is a short, text-based description for the validation step. If nothing is provided here, then an autobrief is generated by the agent, using the language provided in create_agent()'s lang argument (which defaults to "en" or English). The autobrief incorporates details of the validation step, so it is often the preferred option (a label might be better suited to succinctly describe the validation).

- active

Is the validation step active?

scalar<logical> // default: TRUE

A logical value indicating whether the validation step should be active. If the validation function is working with an agent, FALSE will make the validation step inactive (still reporting its presence and keeping indexes for the steps unchanged). If the validation function will be operating directly on data (no agent involvement), then any step with active = FALSE will simply pass the data through with no validation whatsoever. Aside from a logical vector, a one-sided R formula using a leading ~ can be used with . (serving as the input data table) to evaluate to a single logical value. With this approach, the pointblank function has_columns() can be used to determine whether to make a validation step active on the basis of one or more columns existing in the table (e.g., ~ . %>% has_columns(c(d, e))).

- object

A data table for expectations or tests

obj:<tbl_*> // required

A data frame, tibble (tbl_df or tbl_dbi), or Spark DataFrame (tbl_spark) that serves as the target table for the expectation function or the test function.

- threshold

The failure threshold

scalar<integer|numeric> (val >= 0) // default: 1

A simple failure threshold value for use with the expectation (expect_) and the test (test_) function variants. By default, this is set to 1, meaning that any single unit of failure in data validation results in an overall test failure. Whole numbers beyond 1 indicate that any failing units up to that absolute threshold value will result in a succeeding testthat test or evaluate to TRUE. Likewise, fractional values (between 0 and 1) act as a proportional failure threshold, where 0.15 means that 15 percent of failing test units results in an overall test failure.
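The active argument described above also accepts a formula. The sketch below illustrates this on data (no agent). The schema is deliberately wrong, so the step would normally stop(). Because the formula evaluates to FALSE (the table has no d or e columns), the step is inactive and the table passes through. The name wrong_schema is hypothetical.

```r
library(pointblank)

tbl <- dplyr::tibble(a = 1:5, b = letters[1:5])

# Deliberately wrong schema: `a` is declared as character
wrong_schema <- col_schema(a = "character", b = "character")

# The table has no `d` or `e` columns, so this is FALSE
has_de <- has_columns(tbl, columns = c(d, e))

# The formula evaluates to FALSE, so the step is inactive and the
# table is passed through without validation (no stop() occurs)
passed_through <- tbl %>%
  col_schema_match(
    schema = wrong_schema,
    active = ~ . %>% has_columns(c(d, e))
  )
```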

Value

For the validation function, the return value is either a ptblank_agent object or a table object (depending on whether an agent object or a table was passed to x). The expectation function invisibly returns its input but, in the context of testing data, the function is called primarily for its potential side-effects (e.g., signaling failure). The test function returns a logical value.
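The sketch below shows what each variant returns. It uses the same two-column table as the Examples section. The expectation function requires the testthat package to be installed. A schema mismatch is a failure of the single test unit, so with the default threshold = 1 the test function returns FALSE.

```r
library(pointblank)

tbl <- dplyr::tibble(a = 1:5, b = letters[1:5])
schema_obj <- col_schema(a = "integer", b = "character")

# Validation function with an agent: returns a ptblank_agent
agent <- create_agent(tbl = tbl) %>% col_schema_match(schema = schema_obj)

# Validation function on a table: returns the table
tbl_out <- tbl %>% col_schema_match(schema = schema_obj)

# Expectation function (needs testthat): invisibly returns its input
expect_out <- expect_col_schema_match(tbl, schema = schema_obj)

# Test function: a single logical value
test_ok  <- test_col_schema_match(tbl, schema = schema_obj)
test_bad <- test_col_schema_match(
  tbl,
  schema = col_schema(a = "character", b = "character")
)
```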

Supported Input Tables

The types of data tables that are officially supported are:

- data frames (data.frame) and tibbles (tbl_df)
- Spark DataFrames (tbl_spark)
- the following database tables (tbl_dbi):
  - PostgreSQL tables (using the RPostgres::Postgres() as driver)
  - MySQL tables (with RMySQL::MySQL())
  - Microsoft SQL Server tables (via odbc)
  - BigQuery tables (using bigrquery::bigquery())
  - DuckDB tables (through duckdb::duckdb())
  - SQLite (with RSQLite::SQLite())

Other database tables may work to varying degrees but they haven't been formally tested (so be mindful of this when using unsupported backends with pointblank).

Actions

Often, we will want to specify actions for the validation. This argument, present in every validation function, takes a specially-crafted list object that is best produced by the action_levels() function. Read that function's documentation for the lowdown on how to create reactions to above-threshold failure levels in validation. The basic gist is that you'll want at least a single threshold level (specified as either the fraction of test units failed or an absolute value), often using the warn_at argument. Both action_levels(warn_at = 1) and action_levels(stop_at = 1) are good choices depending on the situation (the first produces a warning, the second stop()s).
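The sketch below illustrates the difference between the default stop() behavior and a warn_at action when the validation function is used directly on data. The name bad_schema is hypothetical. The warning is captured with base R's withCallingHandlers() so that the pass-through result can still be inspected.

```r
library(pointblank)

tbl <- dplyr::tibble(a = 1:5, b = letters[1:5])
bad_schema <- col_schema(a = "character", b = "character")

# Default on a table: the failing step stops with an error
stopped <- tryCatch(
  {
    col_schema_match(tbl, schema = bad_schema)
    FALSE
  },
  error = function(e) TRUE
)

# With warn_at = 1, the failure raises a warning and the table passes through
warned <- FALSE
out <- withCallingHandlers(
  col_schema_match(tbl, schema = bad_schema, actions = action_levels(warn_at = 1)),
  warning = function(w) {
    warned <<- TRUE
    invokeRestart("muffleWarning")
  }
)
```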

Labels

label may be a single string or a character vector that matches the number of expanded steps. label also supports {glue} syntax and exposes the following dynamic variables contextualized to the current step:

- "{.step}": The validation step name

The glue context also supports ordinary expressions for further flexibility (e.g., "{toupper(.step)}") as long as they return a length-1 string.
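This is a small sketch of a glue-based label that combines fixed text with an expression on .step. It assumes a pointblank version whose label argument supports {glue} syntax, as described above. The resolved label is shown in the agent report.

```r
library(pointblank)

tbl <- dplyr::tibble(a = 1:5, b = letters[1:5])
schema_obj <- col_schema(a = "integer", b = "character")

# Fixed text plus a glue expression evaluated for this step
agent <- create_agent(tbl = tbl) %>%
  col_schema_match(
    schema = schema_obj,
    label = "Schema check ({toupper(.step)})"
  ) %>%
  interrogate()
```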

Briefs

Want to describe this validation step in some detail? Keep in mind that this is only useful if x is an agent. If that's the case, brief the agent with some text that fits. Don't worry if you don't want to do it: the autobrief protocol kicks in when brief = NULL, and a simple brief will then be automatically generated.

YAML

A pointblank agent can be written to YAML with yaml_write(), and the resulting YAML can be used to regenerate an agent (with yaml_read_agent()) or interrogate the target table (via yaml_agent_interrogate()). When col_schema_match() is represented in YAML (under the top-level steps key as a list member), the syntax closely follows the signature of the validation function. Here is an example of how a complex call of col_schema_match() as a validation step is expressed in R code and in the corresponding YAML representation.

R statement:

agent %>%
  col_schema_match(
    schema = col_schema(
      a = "integer",
      b = "character"
    ),
    complete = FALSE,
    in_order = FALSE,
    is_exact = FALSE,
    actions = action_levels(stop_at = 1),
    label = "The `col_schema_match()` step.",
    active = FALSE
  )

YAML representation:

steps:
- col_schema_match:
    schema:
      a: integer
      b: character
    complete: false
    in_order: false
    is_exact: false
    actions:
      stop_count: 1.0
    label: The `col_schema_match()` step.
    active: false

In practice, both of these will often be shorter, as only the schema argument requires a value. Arguments with default values won't be written to YAML when using yaml_write() (though it is acceptable to include them with their default when generating the YAML by other means). It is also possible to preview the transformation of an agent to YAML without any writing to disk by using the yaml_agent_string() function.
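The sketch below previews an agent's YAML with yaml_agent_string(), writes it to a temporary file with yaml_write(), and rebuilds an agent with yaml_read_agent(). It assumes the agent reads its table through a formula (here pointblank's bundled small_table dataset), so that the YAML can record how to obtain the table. The file name agent-schema.yml is arbitrary.

```r
library(pointblank)

# `small_table` ships with pointblank; the formula defers reading it
agent <- create_agent(tbl = ~ small_table) %>%
  col_schema_match(
    schema = col_schema(a = "integer", b = "character"),
    complete = FALSE,
    in_order = FALSE
  )

# Preview the YAML without writing anything to disk
yaml_agent_string(agent = agent)

# Write to a temporary directory, then regenerate an agent from the file
yaml_write(agent, filename = "agent-schema.yml", path = tempdir())
agent_2 <- yaml_read_agent(filename = "agent-schema.yml", path = tempdir())
```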

Examples

For all examples here, we'll use a simple table with two columns: one integer (a) and the other character (b). The following examples will validate that the table columns match a schema object as created by col_schema().

tbl <-
  dplyr::tibble(
    a = 1:5,
    b = letters[1:5]
  )

tbl
#> # A tibble: 5 x 2
#>       a b
#>   <int> <chr>
#> 1     1 a
#> 2     2 b
#> 3     3 c
#> 4     4 d
#> 5     5 e

Create a column schema object with the helper function col_schema() that describes the columns and their types (in the expected order).

schema_obj <-
  col_schema(
    a = "integer",
    b = "character"
  )

schema_obj
#> $a
#> [1] "integer"
#>
#> $b
#> [1] "character"
#>
#> attr(,"class")
#> [1] "r_type" "col_schema"

A: Using an agent with validation functions and then interrogate()

Validate that the schema object schema_obj exactly defines the column names and column types. We'll determine if this validation has a failing test unit (there is a single test unit governed by whether there is a match).

agent <-

create_agent(tbl = tbl) %>%

col_schema_match(schema = schema_obj) %>%

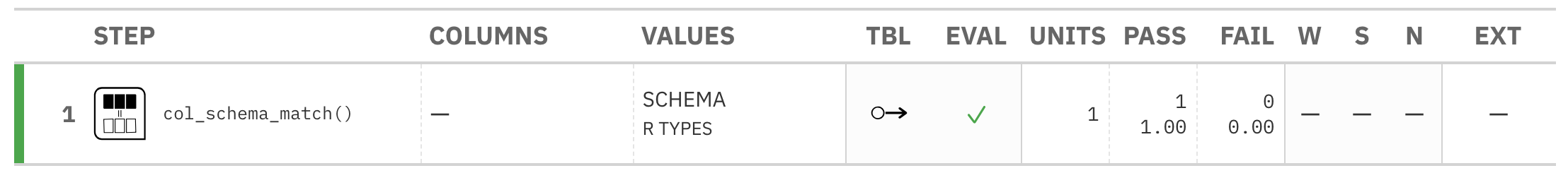

interrogate()Printing the agent in the console shows the validation report in the

Viewer. Here is an excerpt of validation report, showing the single entry

that corresponds to the validation step demonstrated here.
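Instead of reading the report, the outcome can also be checked programmatically. The sketch below rebuilds the same agent and passes it to pointblank's all_passed(), which returns TRUE only when every validation step passed.

```r
library(pointblank)

tbl <- dplyr::tibble(a = 1:5, b = letters[1:5])
schema_obj <- col_schema(a = "integer", b = "character")

agent <-
  create_agent(tbl = tbl) %>%
  col_schema_match(schema = schema_obj) %>%
  interrogate()

# TRUE when all validation steps in the agent passed
agent_passed <- all_passed(agent)
```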

B: Using the validation function directly on the data (no agent)

This way of using validation functions acts as a data filter. Data is passed through, but the function will stop() if the single test unit fails. The behavior of side effects can be customized with the actions option.

tbl %>% col_schema_match(schema = schema_obj)
#> # A tibble: 5 x 2
#>       a b
#>   <int> <chr>
#> 1     1 a
#> 2     2 b
#> 3     3 c
#> 4     4 d
#> 5     5 e

C: Using the expectation function

With the expect_*() form, we would typically perform one validation at a time. This is primarily used in testthat tests.

expect_col_schema_match(tbl, schema = schema_obj)

D: Using the test function

With the test_*() form, we should get a single logical value returned to us.

tbl %>% test_col_schema_match(schema = schema_obj)
#> [1] TRUE

See also

Other validation functions:

col_count_match(),

col_exists(),

col_is_character(),

col_is_date(),

col_is_factor(),

col_is_integer(),

col_is_logical(),

col_is_numeric(),

col_is_posix(),

col_vals_between(),

col_vals_decreasing(),

col_vals_equal(),

col_vals_expr(),

col_vals_gt(),

col_vals_gte(),

col_vals_in_set(),

col_vals_increasing(),

col_vals_lt(),

col_vals_lte(),

col_vals_make_set(),

col_vals_make_subset(),

col_vals_not_between(),

col_vals_not_equal(),

col_vals_not_in_set(),

col_vals_not_null(),

col_vals_null(),

col_vals_regex(),

col_vals_within_spec(),

conjointly(),

row_count_match(),

rows_complete(),

rows_distinct(),

serially(),

specially(),

tbl_match()